Facebook has agreed to tweak some of its policies in response to recommendations from the Oversight Board. The board issued its first round of content moderation decisions last month, overturning several of Facebook’s original actions. In addition to ruling on a handful of specific posts, the board also made recommendations on how the social network might change its policies.

Now Facebook has responded to these suggestions. The company says it has committed to following 11 of the board’s recommendations, including updates to Instagram’s nudity policy. In other areas, such as the suggestion that Facebook notify users when moderation decisions are the result of automation, the company has not yet committed to making permanent changes.

Of the areas where Facebook says it is “committed” to making changes, most are less policy changes than promises to increase “transparency” around its existing rules. For example, Facebook will clarify its rules on health misinformation, as in its latest update to its vaccine guidelines, which spells out the types of claims the company will remove. Facebook also plans to create a new transparency center to better explain its Community Standards to users. The company said it would “share more information about our policy on dangerous people and organizations,” but it “is reviewing the feasibility” of the board’s recommendation that it publish a list of the groups and individuals covered by those rules.

One area where Facebook has agreed to a major change is Instagram’s nudity policy, which will now allow “health-related nudity.” The change follows Facebook’s restoration of a post from a user who had shared photos to raise awareness about breast cancer.

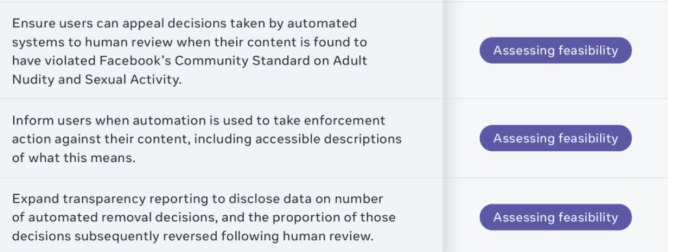

Facebook was less definitive about how it uses automation in content moderation decisions, a topic raised in several of the board’s recommendations. The board had said that Facebook should tell users when an enforcement action is the result of automation rather than human review. The social network says it will “test the board’s recommendation to let people know when their content will be removed by automation,” but it has not made a permanent commitment.

The only area where Facebook refused to make changes is its coronavirus misinformation policy. The Oversight Board had ruled that Facebook should restore a post from a French user falsely claiming that hydroxychloroquine could cure COVID-19. The board also recommended that Facebook take “less intrusive measures” to deal with misinformation about the pandemic when “the potential for physical harm is recognized but not imminent.”

However, in its response, Facebook said that while it would make its coronavirus misinformation rules clearer to users, it would not change how it enforces them. “We will not take any further action on this recommendation as we believe we are already using the least intrusive enforcement measures given the likelihood of impending harm,” Facebook wrote. “We have restored the content based on the binding decision of the board. We will continue to rely on extensive consultations with leading health authorities to learn what is likely to contribute to imminent physical harm. This approach will not change during a global pandemic.”

While Facebook’s response isn’t exactly surprising, it does offer a glimpse into how the social network views the Oversight Board. Facebook has likened the independent board to a “Supreme Court,” and, as with a court, its rulings on individual cases are binding. However, Facebook has significant leeway in whether to adopt the broader policy changes the board recommends. That Facebook adopted some recommendations but only agreed to consider others suggests that it is at least somewhat reluctant to give the board too much influence over its broader policies.

The company’s response comes as it prepares for the Oversight Board’s decision on whether to restore Donald Trump’s account. The board has not said exactly when it will rule on the matter, but a decision is expected in the coming weeks.